February 25, 2026

In January, I wrote that resistance to AI in product development teams isn’t technical. It’s cultural, and it starts with leadership.

Since then, the topic has prompted a set of interesting questions:

- How is AI impacting the organization of product teams?

- Do the three roles — engineering, design, and product management — remain relevant?

- Do the ratios still hold? One designer per product manager and five to nine engineers per product manager?

- What changes in the way teams work?

Short, direct, and honest answer: I don’t know. And anyone who claims to know is probably oversimplifying or giving incomplete answers. We’re building those answers in real time.

It’s still too early for definitive conclusions. But there are already enough signals to observe some practical effects. Not as consolidated trends, but as movements that are beginning to emerge in different contexts.

What follows are field observations: situations I’ve seen repeating, with variations, across companies of different sizes and industries.

When execution gets cheaper, the rest needs to reorganize

The most immediate effect of AI on product development has been an increase in execution speed. Writing initial code, generating variations of a solution, creating prototypes, consolidating research and interview data, or testing hypotheses has become faster in many contexts.

This doesn’t eliminate the work. But it changes the cost of doing certain things. And when the cost of execution changes, the rest of the system tends to reorganize around it.

For a long time, implementation capacity was one of the main constraints. There were always more ideas than the team could build. That meant team organization was designed around this scarcity: squads structured to deliver backlog, with engineering as the primary scarce resource.

In some places, that balance is starting to be challenged. Not because engineering is less important, but because individual productivity has increased. What used to take days can now take hours. What once required long cycles of refinement can now be tested much more quickly.

This doesn’t happen in every team, nor uniformly. But it happens enough to raise an important question: if building has become cheaper, where does the bottleneck move?

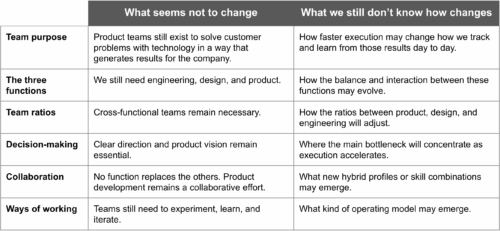

The bottleneck starts to shift

In recent conversations, I’ve heard something that would have been unlikely not long ago: engineering teams saying they can implement faster than decisions are being made.

This isn’t a widespread complaint or a universal diagnosis. But it shows up with increasing frequency. When the cost of testing a hypothesis drops, deciding what to test carries more weight.

This doesn’t mean product “becomes the bottleneck” or that the solution is simply to hire more PMs. But it does make the role of decision-making more visible: prioritizing, defining problems, choosing what not to build, providing clear direction. Things that were always important but were partially hidden behind the effort of implementation.

In one company I spoke with recently, the engineering team started generating multiple variations of the same solution in a short time. Meanwhile, the design process was still organized around refining a single version to pixel-perfect quality before any testing. The tension that emerged wasn’t about who was right, but about adjusting the way of working to a new reality of cost and speed.

When testing gets cheaper, the order of things may need to change.

Team ratios start to be questioned

For a long time, the classic product team setup involved one product person, one designer, and a larger group of engineers. In some contexts, five to nine engineers per PM.

This model was built in a scenario where implementation capacity was the main constraint. If engineering productivity increases, it’s natural that some companies start asking whether those ratios still make sense.

I haven’t seen radical or widespread changes. No one is dismantling squads or drastically shrinking engineering teams because of AI. But I am seeing discussions emerge.

In one case, the question was whether it still made sense to keep the same squad size when the ability to prototype and test had increased so much. In another, the reflection centered on the role of the product manager in a context where teams can test several alternatives quickly. In yet another, on how design fits when the cost of generating interface variations drops.

There are no single answers. But the fact that these questions are being asked already suggests that team organization is starting to be reconsidered.

AI as a tool for understanding systems

One use that keeps appearing, and perhaps gets less attention than it should, is AI as a tool for understanding existing systems.

In companies with older or complex codebases, AI tools are being used to:

- explain sections of code

- document implicit business rules

- answer questions about system behavior

- help product people validate logic without depending on a single engineer

This doesn’t replace deep knowledge or eliminate the need for seniority. But it changes how knowledge circulates. Things that used to be concentrated in a few people become more accessible.

In one team I spoke with, adopting these tools reduced the time needed to understand parts of the system that no one fully mastered. It didn’t solve everything, but it reduced reliance on individual knowledge and improved communication between product and engineering.

This kind of use doesn’t always show up in broader discussions about AI, but it has meaningful organizational implications.

Seniority and supervision

Another recurring pattern is the need for supervision and review. AI tools can significantly accelerate the work of less experienced people, but that doesn’t eliminate the need for review by more senior ones. In some cases, it increases it.

When generating code becomes easier, evaluating solution quality, defining architecture, and ensuring system coherence carry more weight. Teams that already had strong review and collaboration practices tend to adapt more smoothly. Teams that relied on more isolated contributions may find the transition more complex.

This isn’t exclusive to AI. But the speed at which things can now be generated makes the importance of certain roles more visible.

The three functions are still necessary

Amid these changes, one thing I haven’t seen disappear is the need for all three functions. We still need engineering to turn ideas into reliable systems, design to shape solutions and make them understandable and usable, and product to provide direction, choose problems, and align decisions with the results the company needs to achieve.

AI changes the nature of the work within these functions, but it doesn’t eliminate the need for them.

A client once told me that three of their engineers had decided to build an internal system using vibe-coding tools. They expected to have something ready in a weekend. Three months later, the system still wasn’t up and running. The conversation we had was direct:

“Joca, we were three engineers building an internal product, but we were missing what you always talk about: a clear product vision. We didn’t know exactly what we were trying to build.”

The ability to build became faster. The need for direction didn’t decrease.

New arrangements start to appear

New arrangements are also starting to emerge within teams. In some contexts, people with more generalist profiles are appearing, capable of turning ideas into working prototypes quickly using AI tools. In others, specific initiatives are being created to support AI adoption, whether as experimentation groups or as part of internal enablement programs.

These aren’t necessarily new formal roles. Often they’re adjustments within existing ones: engineers focusing more on architecture and integration, product people with more direct access to data and experimentation, designers exploring multiple variations faster.

These arrangements are still experimental. Each company is finding what makes sense for its context.

What changes when execution accelerates

Perhaps the most useful way to look at this isn’t to ask “how many people will a team have,” but rather “what starts to matter more when execution stops being the main constraint.”

If testing hypotheses becomes cheaper, choosing the right hypotheses carries more weight. If generating code becomes faster, the quality of architectural decisions carries more weight. If exploring alternatives becomes easier, clarity about the problem carries more weight.

None of this is new. But it becomes more visible.

In the previous article, I wrote that AI changes the type of work: less execution, more decision-making and strategy. What I’m starting to observe now is how that shift in the nature of work begins to reflect on team organization.

There’s still no clear new model. What we have are adjustments, experiments, and questions being asked.

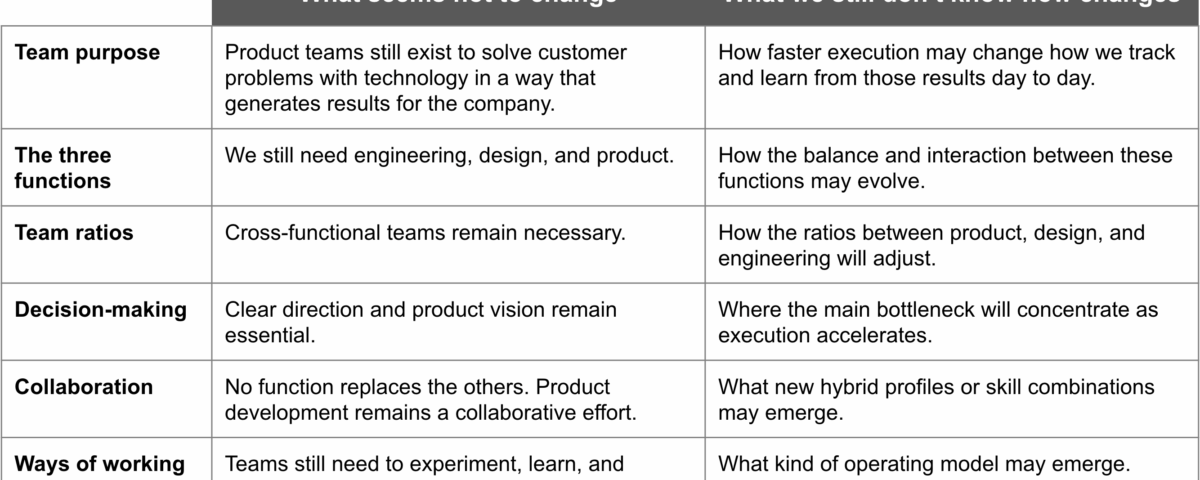

What seems stable and what we are still trying to understand

Below is a synthesis, based on my observations, of what seems stable and what we are still trying to understand as AI becomes part of the everyday work of product teams:

We are writing this story now

We’re still in a moment of transition. In some companies, AI adoption is already part of everyday work. In others, it’s just beginning. In still others, it’s approached with caution.

The impact on team organization won’t be uniform or immediate. But there are already signs that faster execution is making the role of decision-making, architecture, and problem definition more visible.

Perhaps the most useful question right now isn’t what the “ideal” team structure will be, but how we treat team organization itself as something that also needs to be designed, tested, and adjusted.

If we build products through cycles of experimentation, we may need to approach team organization the same way: as a product in evolution. Every role adjustment, every ratio change, every experiment in ways of working helps us better understand how to organize people to fulfill the mission that has always been at the center: solving customer problems through technology in a way that generates results for the business.

We’re writing this story now. And, like any product in development, we’ll understand the design better as we experiment.
